Stylized Adversarial Defense

Apr 1, 2022

Muzammal Naseer

Salman Khan

Munawar Hayat

Fahad Shahbaz Khan

Fatih Porikli

Abstract

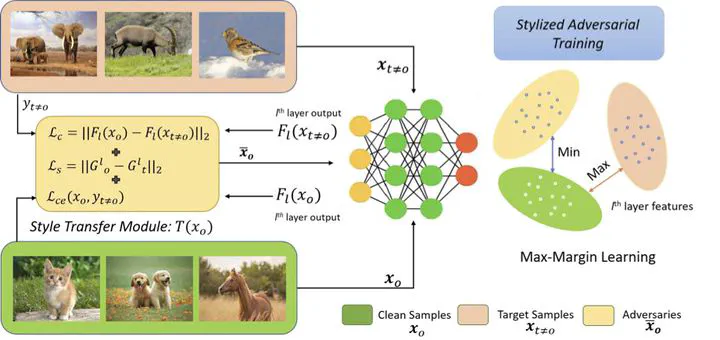

Deep Convolutional Neural Networks (CNNs) can easily be fooled by subtle, imperceptible changes to the input images. To address this vulnerability, adversarial training creates perturbation patterns and includes them in the training set to robustify the model. In contrast to existing adversarial training methods that only use class-boundary information (e.g., using a cross-entropy loss), we propose to exploit additional information from the feature space to craft stronger adversaries that are in turn used to learn a robust model. Specifically, we use the *style* and *content* information of the target sample from another class, alongside its class-boundary information, to create adversarial perturbations. We apply our proposed *multi-task* objective in a deeply supervised manner, extracting multi-scale feature knowledge to create maximally separating adversaries. Subsequently, we propose a max-margin adversarial training approach that minimizes the distance between the source image and its adversary and maximizes the distance between the adversary and the target image. Our adversarial training approach demonstrates strong robustness compared to state-of-the-art defenses, generalizes well to naturally occurring corruptions and data distributional shifts, and retains the model’s accuracy on clean examples.
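
The max-margin idea in the abstract can be illustrated with a small sketch. The abstract does not give the exact form of the loss, so everything here is an assumption for illustration: a triplet-style hinge, Euclidean distance on plain feature vectors, and the hypothetical names `l2_distance`, `max_margin_loss`, and `margin`. It is not the authors' implementation.

```python
import math


def l2_distance(a, b):
    """Euclidean distance between two equal-length feature vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def max_margin_loss(source_feat, adv_feat, target_feat, margin=1.0):
    """Triplet-style hinge loss (an assumed form, not taken from the paper).

    The loss is zero once the adversary is at least `margin` closer to its
    source image than to the target image it was crafted from.
    """
    d_source = l2_distance(adv_feat, source_feat)  # distance to minimize
    d_target = l2_distance(adv_feat, target_feat)  # distance to maximize
    return max(0.0, d_source - d_target + margin)


# Toy 2-D features: source and target belong to different classes.
source = [1.0, 0.0]
target = [0.0, 1.0]

# An adversary whose features stay near the source incurs no loss ...
loss_near_source = max_margin_loss(source, [0.9, 0.1], target)

# ... while one whose features drift toward the target is penalized.
loss_near_target = max_margin_loss(source, [0.1, 0.9], target)
```

In this toy setting the first loss is zero and the second is positive, which matches the stated goal of keeping the adversary close to the source image and far from the target image in feature space.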

Type

Publication

In *IEEE Transactions on Pattern Analysis and Machine Intelligence, TPAMI 2022*